Frontier AI Auditing-Related Legislation in the US: Landscape, Challenges, and a Path Forward

Note: this article has not been updated to reflect legislative developments since its publication on April 20, 2026.

AI capabilities are advancing much faster than the scrutiny applied to this critical technology. A justified sense that AI companies are generally “checking their own homework” on safety and security fuels public skepticism and makes organizations more hesitant to deploy AI in high-stakes domains.

Self-assessment of AI safety and security, however well-intended and well-documented, cannot fully resolve the inherent conflict of interest at play. Bias does not require bad faith: sincere developers may unconsciously frame risks in ways that their existing mitigations already address. External auditors can more even-handedly compare practices across firms and bring a perspective less shaped by any one company's internal assumptions and culture.

While some companies voluntarily engage with third parties, voluntary uptake will continue to be uneven by default, and to the extent auditing involves real costs, voluntariness could disadvantage the very companies trying to be proactive.

We and others have therefore proposed requiring that third-party auditors verify the claims that AI companies make about their technology and evaluate AI systems and company practices against relevant standards. Currently, there is no state or federal requirement to do this in the US – many legislators and AI company employees worry that auditing requirements would hinder innovation and that there aren't enough qualified auditors.

This post surveys the landscape of legislation related to auditing in the US and then summarizes the challenges that critics of audit requirements have pointed out. We then outline a path forward that addresses these challenges, and highlight the first audit-related bill that AVERI has chosen to endorse.

Terminology

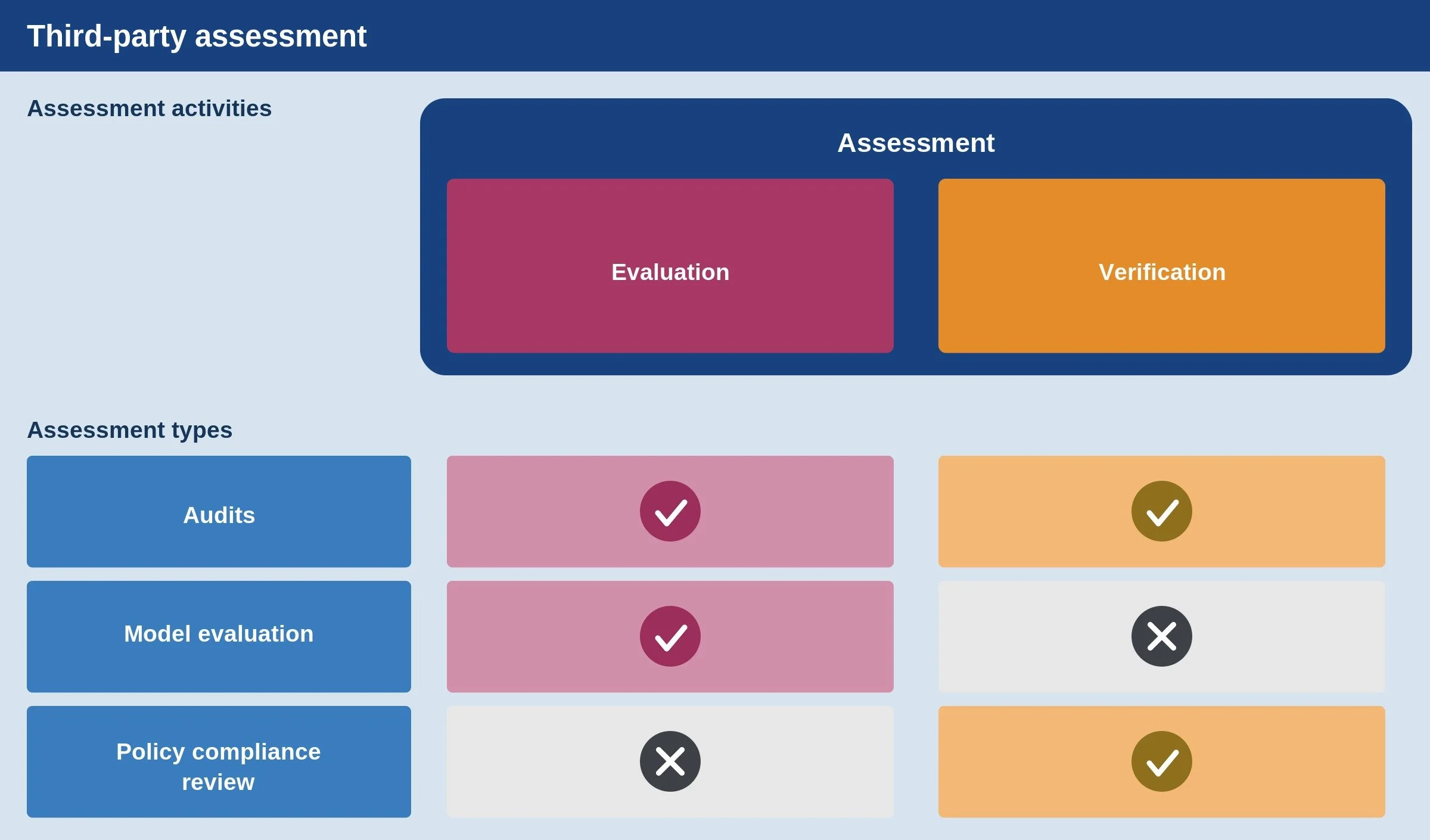

In line with our paper, “Frontier AI Auditing,” we use assessment as an umbrella term for analysis by third parties of a leading AI company’s systems and practices. Assessments involve one or both of two activities: verification of whether a certain claim is true or whether a certain commitment is being upheld (e.g., a company following its own safety and security policies), and evaluation of the properties of a system or company’s practices with respect to one or more external standards (e.g., through technical analysis of a model or review of safety processes).

We believe that frontier AI auditing should involve a mix of verification and evaluation – independent auditors should be given access to non-public information that allows them to verify whether companies are doing what they say they’re doing and to evaluate systems and practices against applicable external standards. For the purposes of this post, though, we treat all requirements for external assessment as “audit-related” even if they don’t include all the elements of our definition.

The Legislative Landscape

As of publication, the U.S. landscape includes a mix of enacted transparency laws, vetoed and proposed audit requirements, proposals to create voluntary audit frameworks, and the commissioning of a report on a voluntary framework.

Among other provisions, SB 53 in California (currently in force) and the RAISE Act in New York (taking effect in 2027) require companies to have and publish safety and security policies. Both laws also require companies to disclose what role, if any, third-party evaluators play in their safety and security practices. These are important foundations: auditors could verify whether safety and security policies are being followed, and where third parties are involved, transparency about the process can help inform other companies on how to follow suit. The RAISE Act goes slightly further by requiring that policies be detailed. But neither law actually requires working with third parties, and neither sets a substantive quality bar for safety and security policies.

Most recently, Virginia’s HB 797 directed the Joint Commission on Technology and Science to study the feasibility and impact of an Independent Verification Organization (IVO) framework, which would give liability benefits in exchange for participation in a voluntary audit regime. It does not itself establish that framework, though the report – due later this year – will be a valuable resource.

Several proposed bills would go further, but have not been passed or signed into law for reasons we discuss in the next section. The table below summarizes the landscape of enacted, defeated, and proposed audit-related legislation. We omit some arguably relevant legislation – for example, requirements for governmental rather than private testing and legislation not focused on frontier AI systems.

| Legislation | Impact | Trigger | Type of risks addressed | Standard | Status |
|---|---|---|---|---|---|
| SB 53 (California, 2025) | Light encouragement via transparency | Developers meeting compute thresholds (10^26 FLOPs) and revenue thresholds ($500 million) | Catastrophic risks | N/A | Enacted (in force now) |
| RAISE Act (New York, 2025) | Slightly stronger encouragement via transparency | Developers meeting compute thresholds (10^26 FLOPs) and revenue thresholds ($500 million) | Catastrophic risks | N/A | Enacted (in force next year) |
| SB 1047 (California, 2024) | Requirement (annual) | Developers who trained a model meeting compute threshold (10^26 FLOPs) and training expense threshold ($100 million) | Catastrophic risks | Not posing “unreasonable risks of causing or materially enabling critical harms” | Vetoed |
| SB 813 (California), HB 628 (Ohio) – grouped together due to similarities | Create a voluntary Independent Verification Organization (IVO) framework | N/A (voluntary) | Personal injury, reasonably foreseeable harm, property damage | Government-certified IVOs would develop standards | Pending |
| HB 797 (Virginia) | Study the creation of an Independent Verification Organization (IVO) framework | N/A (voluntary) | Personal injury, property damage | Government-certified IVOs would develop standards | Enacted |
| HB 4668 (Michigan, 2025) | Requirement (annual) | Developers meeting training expense threshold for one model ($5M) and overall compute expense threshold ($100M in the past 12 months) | Catastrophic risks | Verification against own policies | Pending |
| VET AI Act (federal) | Create voluntary auditing standards | N/A (voluntary) | Privacy, harm mitigation, data quality, documentation and communication, and governance | Out of scope (the focus is on standards for the auditing process rather than standards for safety and security itself) | Pending |
| HB 3506 (Illinois, 2025) | Requirement (annual) | Developers meeting training expense threshold ($100M) | Catastrophic risks | Verification against own policies | Did not pass legislature |
| HB 4705/SB 3261 (Illinois, 2026) | Requirement (annual) | Developers meeting compute thresholds (10^26 FLOPs) and revenue thresholds ($500 million) | Catastrophic risks and child safety | Verification against own policies | Pending (discussed further in final section) |
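
As a rough illustration of how a two-part trigger like the compute-and-revenue thresholds in the table combines, the Python sketch below treats a developer as covered only when both thresholds are exceeded. The class and function names, the strict "greater than" comparisons, and the sample figures are illustrative assumptions rather than statutory language; the actual definitions in each bill are more detailed.

```python
from dataclasses import dataclass

# Threshold values taken from the table; how each bill measures them is not modeled here.
COMPUTE_THRESHOLD_FLOPS = 1e26
REVENUE_THRESHOLD_USD = 500_000_000


@dataclass
class Developer:
    """Hypothetical record of the two quantities the triggers refer to."""
    name: str
    max_training_compute_flops: float
    annual_revenue_usd: float


def meets_trigger(dev: Developer) -> bool:
    """Return True only if BOTH thresholds are exceeded (assumed strict comparison)."""
    return (
        dev.max_training_compute_flops > COMPUTE_THRESHOLD_FLOPS
        and dev.annual_revenue_usd > REVENUE_THRESHOLD_USD
    )


# Made-up example developers, not real companies.
large_lab = Developer("large_lab", 3e26, 2_000_000_000)
small_lab = Developer("small_lab", 3e26, 100_000_000)
```

Under these assumptions, `meets_trigger(large_lab)` is true, while `meets_trigger(small_lab)` is false because the revenue threshold is not met even though the compute threshold is.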

Challenges with Audit Requirements

In response to the bills above and others (e.g., earlier versions of the RAISE Act that included audit requirements), AI companies, their trade associations, and aligned commentators have often opposed audit requirements. Below, we explain the most critical objections that have been raised, before proposing ways to address them.

First, companies point to the lack of a clear standard for safety and security against which their systems and practices can be measured. Without such standards, some companies worry, audit results may raise concerns among customers without actually driving progress toward industry-wide safety and security. In other words, there is no clear “success condition” for a comprehensive audit (one that goes beyond verifying compliance with a company’s own policies), making planning for such audits difficult. Companies worry that decontextualized findings will generate confusion and reputational harm without producing much real safety and security value.

Second, companies have expressed concern about an audit supply problem: who will carry out these audits, and with what expertise? A large share of expertise on frontier AI is concentrated in the very companies being audited, and what outside expertise exists could be stretched thin by requiring too many audits too quickly. If that happens, unqualified vendors could rush into the market offering “rubber stamp” audits that create a false sense of confidence or detract from more important safety and security investments.

Third, companies worry about protecting sensitive intellectual property and user data, since auditing by definition involves access to non-public information. The recently launched AI Evaluator Forum has begun to articulate initial standards for appropriate access, and in our work we have discussed different tiers of access and related information protections. But given the early state of these standard-setting processes, it is reasonable for companies to worry that the nascent auditor ecosystem might do a poor job protecting the critical information that auditors would sometimes be entrusted with in the course of an audit, particularly if too many audits are required at too many companies too soon.

Finally, there is the problem of burdensomeness. Companies worry that audits will hinder innovation by creating excessive compliance burdens. Without careful design, audits could impose large time burdens on already-stretched internal teams, and could disproportionately burden smaller companies. For example, audits might require employees to perform engineering work so that auditors can access certain information securely, to gather extensive documentation or produce novel summaries of certain processes, or to be interviewed about company systems and practices.

A Path Forward

The challenges described above are real but surmountable. And the high stakes of insufficient scrutiny demand that we find a path forward.

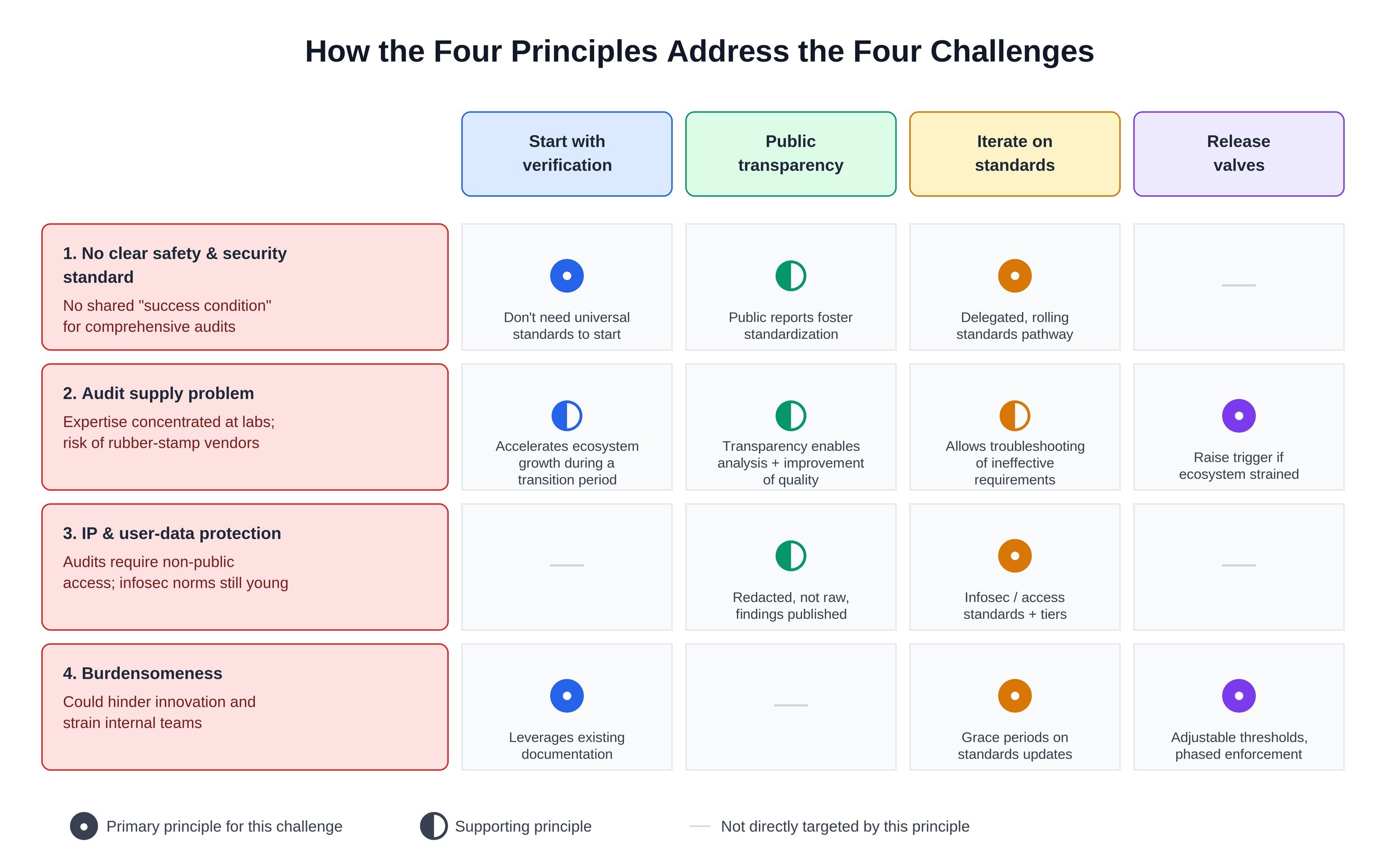

Well-designed public policies can move us, step by step, toward effective and universal frontier AI auditing, and avoid a race to the bottom on safety and security. Below we propose four principles that can inform the design of legislation that requires frontier AI auditing.

Note that we assume in each case that these requirements will be layered on top of existing transparency requirements like SB 53 and the RAISE Act, so we will not repeat some of the key points that arose when crafting such requirements (e.g., the need for focusing on the leading AI companies, which partially addresses the concern about burdensomeness disproportionately affecting small companies).

The figure below summarizes how our four principles help overcome the challenges discussed above.

Start by verifying compliance with company policies

Waiting for comprehensive, industry-wide safety and security standards before getting started would be a mistake given the rapid pace of AI’s development and deployment, and the urgency of many related risks. Fortunately, universal standards aren’t needed in order to get started.

The path forward involves building on what already exists: company-level policies on safety and security. Auditors can verify companies’ compliance with their own policies and share appropriately redacted versions of their findings, building institutional capacity that can later support auditing against industry-wide standards.

Setting a clear timeline for this kind of verification will help stimulate growth and investment in the auditing ecosystem. Importantly, while verification is a key first step, company policies may be too weak by default, so this transition phase should not last forever. The same legislation can set one timeline for verifying compliance with companies’ own policies and a separate, later timeline for evaluation against common standards.

Lean into public transparency

Under the definitions used above, an audit involves assessing non-public information, and that specific information can’t be published by auditors. But the redacted versions of audit findings and details on the audit process should be published in order to hold auditors publicly accountable for conducting rigorous analysis rather than checkbox exercises.

Note that this principle differs from some proposals under which auditors would send audit reports only to regulators – an approach we think could encourage checkbox compliance due to limited feedback loops. Regulators should have access to unredacted results, but public redacted results are necessary in order to build an industry-wide understanding of AI safety and security.

For example, an auditor's report might note that a company withheld certain information on the grounds of that information being sensitive and that, as a result, the auditor could not determine whether a key policy commitment had been met. Publishing that conclusion would not itself reveal the confidential company information, but it would provide fodder for public discussion about the balance between assurance and burdensomeness, and direct researcher attention towards better privacy-preserving access mechanisms.

Similarly, auditors should be required to publish credentials and disclosures of conflicts of interest. This transparency will help enable informed discussion of audit supply, demand, and quality.

Iterate on standards

Rather than writing detailed rules for safety and security – and for the auditing process itself – into legislation today, policymakers can instead designate one or more sources of formal standards or more informal best practices (we refer to both categories collectively as “standards” below). For example, legislation might require a government official or agency to either promulgate standards or designate a source of standards, and to do so six months after passage of the legislation. There may be multiple organizations involved in sorting out the details of such standards – e.g., one organization could be responsible for defining audit process standards and another could be responsible for defining information security standards.

Various public and private organizations exist that could produce or inform standards for AI safety and security and auditing thereof. These include the Center for AI Standards and Innovation (CAISI), the Frontier Model Forum, and the AI Evaluator Forum. Illustratively, CAISI could promulgate official standards on safety and security and the auditing thereof; the Frontier Model Forum (a group of leading AI companies) could be a source of standards for internal risk mitigations and for different components of safety and security policies, which could inform CAISI standards; and the AI Evaluator Forum (a group of assessment organizations) could also be a source of input for CAISI’s standards or a source of standards in its own right. Many permutations are possible, though the key takeaway here is that there are foundations to build on.

To balance rapid iteration with regulatory predictability, standards could be applied in a rolling fashion with grace periods. For example, suppose that a standard is updated in the middle of an ongoing audit. Audit legislation could give companies the option of either immediately switching to a new set of standards or completing an ongoing audit using the prior set of standards for up to one quarter after the announcement.
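
The grace-period rule in the example above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: "one quarter" is approximated as 91 days, an audit counts as ongoing if it started on or before the announcement date, and the function and parameter names are hypothetical rather than drawn from any bill.

```python
from datetime import date, timedelta

# Assumption: "one quarter" is approximated as 91 days.
GRACE_PERIOD = timedelta(days=91)


def may_use_prior_standard(
    audit_start: date,
    standard_announced: date,
    expected_completion: date,
) -> bool:
    """Return True if the company may finish the audit under the prior standard.

    The option exists only when the audit was already under way at the
    announcement and will complete within the grace period after it.
    """
    was_ongoing = audit_start <= standard_announced
    within_grace = expected_completion <= standard_announced + GRACE_PERIOD
    return was_ongoing and within_grace


# Hypothetical dates: an audit begun in January, with a standard updated on February 1.
announced = date(2027, 2, 1)
finishes_in_time = may_use_prior_standard(date(2027, 1, 10), announced, date(2027, 4, 15))
finishes_too_late = may_use_prior_standard(date(2027, 1, 10), announced, date(2027, 6, 1))
```

Here `finishes_in_time` is true and `finishes_too_late` is false, since the second audit would run past the 91-day window and would therefore have to switch to the updated standard.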

Early in the development of frontier AI auditing, the most urgent issues should be prioritized for standardization – ones that directly relate to key challenges discussed above. For example, establishing a clear way of adjudicating disagreements between companies and auditors about access and redaction should be a high priority, whereas standardizing audit report formats is less critical in the near term, as long as key components are somehow included in the reports.

Bake in release valves

Clear deadlines and economic incentives would focus attention and investment in the auditing ecosystem, so we are optimistic that the audit supply problem can be solved relatively quickly (e.g., we believe that it would be reasonable to require 5-10 frontier AI companies to be audited starting in early 2027). There is significant latent capacity (e.g., in academia) that can be brought to bear in achieving this or even more ambitious auditing timelines, and there is a growing network of for-profit and non-profit assessment organizations. But it is impossible to predict with certainty which audit providers will emerge and when, so audit-related legislation could accommodate this uncertainty through temporary “release valves” that provide flexibility in response to new information – for example, if the auditing ecosystem is straining to meet demand at a high level of quality.

Two key types of release valves that policymakers should consider are:

Adjusting the threshold that triggers audit requirements. If, per credible public research, the audit ecosystem is being stretched too thin as a result of many companies needing to be audited, the threshold for triggering an audit requirement could be raised in order to focus more narrowly on the sources of greatest potential risk.

Delaying enforcement of new standards. If standard-setting bodies don’t move quickly enough for regulators to have confidence in going beyond verification, the verification-only period could be extended.

Release valves would ideally be triggered by objective metrics, to reduce the risk of them being triggered as a result of political pressure. And the further in the future auditing requirements come into force, the less it makes sense to include release valves, since there will be more time to prepare.

Our First Endorsement and Next Steps

An audit requirement based on the principles above would help reduce safety and security risks from AI and build user and investor confidence in this increasingly critical industry.

Getting legislation along those lines passed will require different stakeholders to compromise, and we have been heartened to hear from contacts across industry, civil society, and government that there is openness to such a path.

Toward this end, AVERI recently endorsed legislation for the first time, specifically HB 4705/SB 3261 in Illinois. In line with recent state legislation trends, HB 4705/SB 3261 builds directly on the framework established in SB 53 and the RAISE Act in order to address concerns about a patchwork of conflicting requirements.

This legislation reflects many of the ideas discussed above – for example, it focuses on verification of compliance with companies’ own policies – and would represent significant progress. We appreciate that the legislators involved are receptive to feedback on how to ensure the legislation creates clear, focused requirements.

We have been glad to see Anthropic also endorse this bill and OpenAI favorably discuss auditing in general in a recent publication. We hope these recent developments indicate the beginning of more robust industry support for auditing, and that the principles above can help us move toward effective and universal frontier AI auditing, step by step. We look forward to continued engagement with various stakeholders about the details of audit requirements and auditing standards.